Packages that are being used in this notebook:

from importlib.metadata import version

print("torch version:", version("torch"))
print("tiktoken version:", version("tiktoken"))

torch version: 2.5.1
tiktoken version: 0.7.0


(If you encounter an ssl.SSLCertVerificationError when executing the previous code cell, it might be due to using an outdated Python version; more information is available on GitHub.)


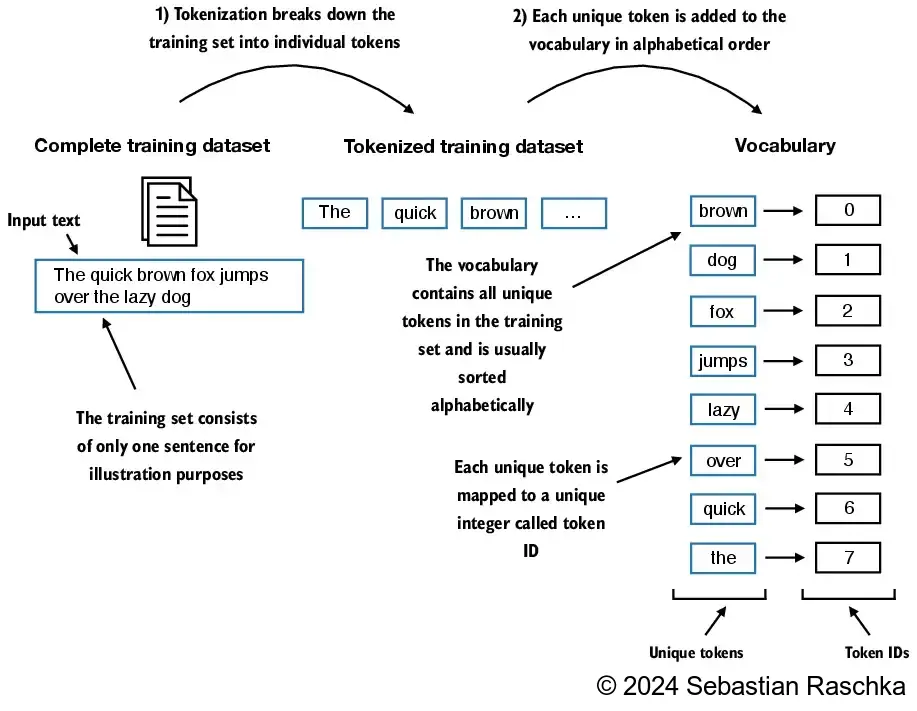

['I', 'HAD', 'always', 'thought', 'Jack', 'Gisburn', 'rather', 'a', 'cheap', 'genius', '--', 'though', 'a', 'good', 'fellow', 'enough', '--', 'so', 'it', 'was', 'no', 'great', 'surprise', 'to', 'me', 'to', 'hear', 'that', ',', 'in']


('!', 0)

('"', 1)

("'", 2)

('(', 3)

(')', 4)

(',', 5)

('--', 6)

('.', 7)

(':', 8)

(';', 9)

('?', 10)

('A', 11)

('Ah', 12)

('Among', 13)

('And', 14)

('Are', 15)

('Arrt', 16)

('As', 17)

('At', 18)

('Be', 19)

('Begin', 20)

('Burlington', 21)

('But', 22)

('By', 23)

('Carlo', 24)

('Chicago', 25)

('Claude', 26)

('Come', 27)

('Croft', 28)

('Destroyed', 29)

('Devonshire', 30)

('Don', 31)

('Dubarry', 32)

('Emperors', 33)

('Florence', 34)

('For', 35)

('Gallery', 36)

('Gideon', 37)

('Gisburn', 38)

('Gisburns', 39)

('Grafton', 40)

('Greek', 41)

('Grindle', 42)

('Grindles', 43)

('HAD', 44)

('Had', 45)

('Hang', 46)

('Has', 47)

('He', 48)

('Her', 49)

('Hermia', 50)


import re

class SimpleTokenizerV1:
    def __init__(self, vocab):
        self.str_to_int = vocab
        self.int_to_str = {i:s for s,i in vocab.items()}

    def encode(self, text):
        preprocessed = re.split(r'([,.:;?_!"()\']|--|\s)', text)

        preprocessed = [
            item.strip() for item in preprocessed if item.strip()
        ]
        ids = [self.str_to_int[s] for s in preprocessed]
        return ids

    def decode(self, ids):
        text = " ".join([self.int_to_str[i] for i in ids])
        # Remove spaces before the specified punctuation marks
        text = re.sub(r'\s+([,.?!"()\'])', r'\1', text)
        return text

- The encode function turns text into token IDs
- The decode function turns token IDs back into text
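As a quick round-trip check, the sketch below applies the same split and join rules to a tiny vocabulary. The sample sentence and the names `split_tokens`, `toy_vocab`, and `inverse_vocab` are illustrative assumptions, not part of the notebook.

```python
import re

# Hypothetical sample text; the notebook builds its vocabulary from a short story.
sample = "It was no great surprise to me, to hear that."

def split_tokens(text):
    # Same splitting rule as SimpleTokenizerV1.encode
    parts = re.split(r'([,.:;?_!"()\']|--|\s)', text)
    return [p.strip() for p in parts if p.strip()]

tokens = split_tokens(sample)

# Vocabulary: each unique token mapped to an integer ID (sorted for determinism)
toy_vocab = {tok: i for i, tok in enumerate(sorted(set(tokens)))}
inverse_vocab = {i: tok for tok, i in toy_vocab.items()}

# Encode: tokens -> IDs
ids = [toy_vocab[t] for t in tokens]

# Decode: IDs -> tokens, joined with spaces, then spaces before punctuation removed
decoded = " ".join(inverse_vocab[i] for i in ids)
decoded = re.sub(r'\s+([,.?!"()\'])', r'\1', decoded)

print(ids)
print(decoded)
```

For this sentence the decoded string matches the input exactly. In general the round trip is lossy, because the original whitespace is discarded during encoding.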


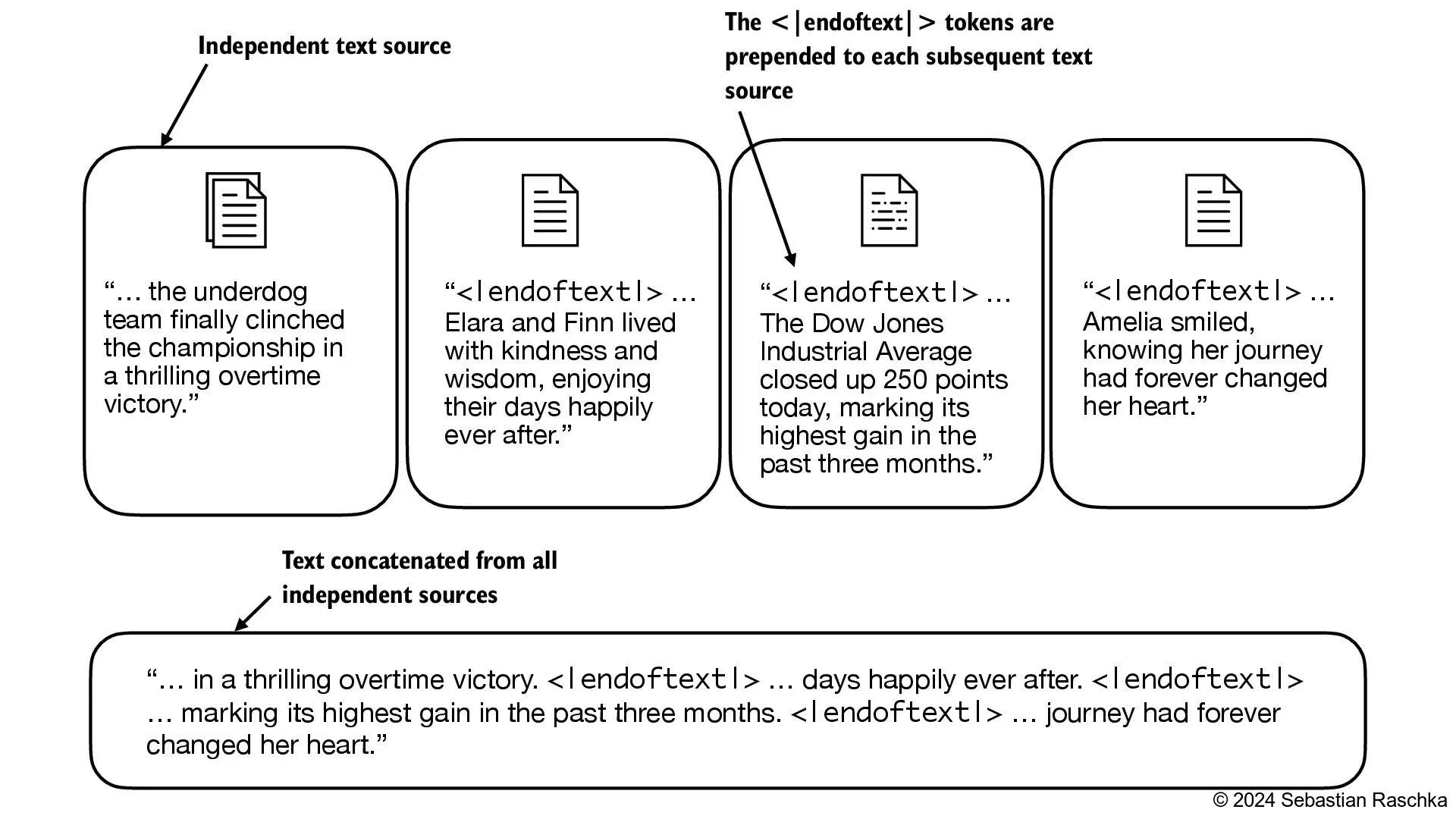

Some tokenizers use special tokens to help the LLM with additional context

Some of these special tokens are:

- [BOS] (beginning of sequence) marks the beginning of text
- [EOS] (end of sequence) marks where the text ends (this is usually used to concatenate multiple unrelated texts, e.g., two different Wikipedia articles or two different books, and so on)
- [PAD] (padding) if we train LLMs with a batch size greater than 1 (we may include multiple texts with different lengths; with the padding token we pad the shorter texts to the longest length so that all texts have an equal length)
- [UNK] to represent words that are not included in the vocabulary

Note that GPT-2 does not need any of these tokens mentioned above but only uses an <|endoftext|> token to reduce complexity

The <|endoftext|> is analogous to the [EOS] token mentioned above

GPT also uses the <|endoftext|> token for padding (since we typically use a mask when training on batched inputs, we would not attend to padded tokens anyway, so it does not matter what these tokens are)
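To make the padding idea concrete, here is a minimal plain-Python sketch. It assumes that 50256 is the ID of <|endoftext|> in the GPT-2 vocabulary. The helper name `pad_batch` and the example batch are hypothetical.

```python
# Assumption: 50256 is the ID of <|endoftext|> in the GPT-2 vocabulary.
PAD_ID = 50256

def pad_batch(sequences, pad_id=PAD_ID):
    """Pad every sequence to the length of the longest one and build a mask."""
    max_len = max(len(seq) for seq in sequences)
    padded, mask = [], []
    for seq in sequences:
        n_pad = max_len - len(seq)
        padded.append(seq + [pad_id] * n_pad)
        # 1 marks a real token, 0 marks a padded position to be ignored
        mask.append([1] * len(seq) + [0] * n_pad)
    return padded, mask

# Hypothetical batch of three token-ID sequences with different lengths
batch = [[40, 367, 2885], [1807, 3619], [10899]]
padded, mask = pad_batch(batch)

for row, m in zip(padded, mask):
    print(row, m)
```

Because masked positions are ignored, the specific value used for padding does not affect the result, which is why reusing <|endoftext|> works.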

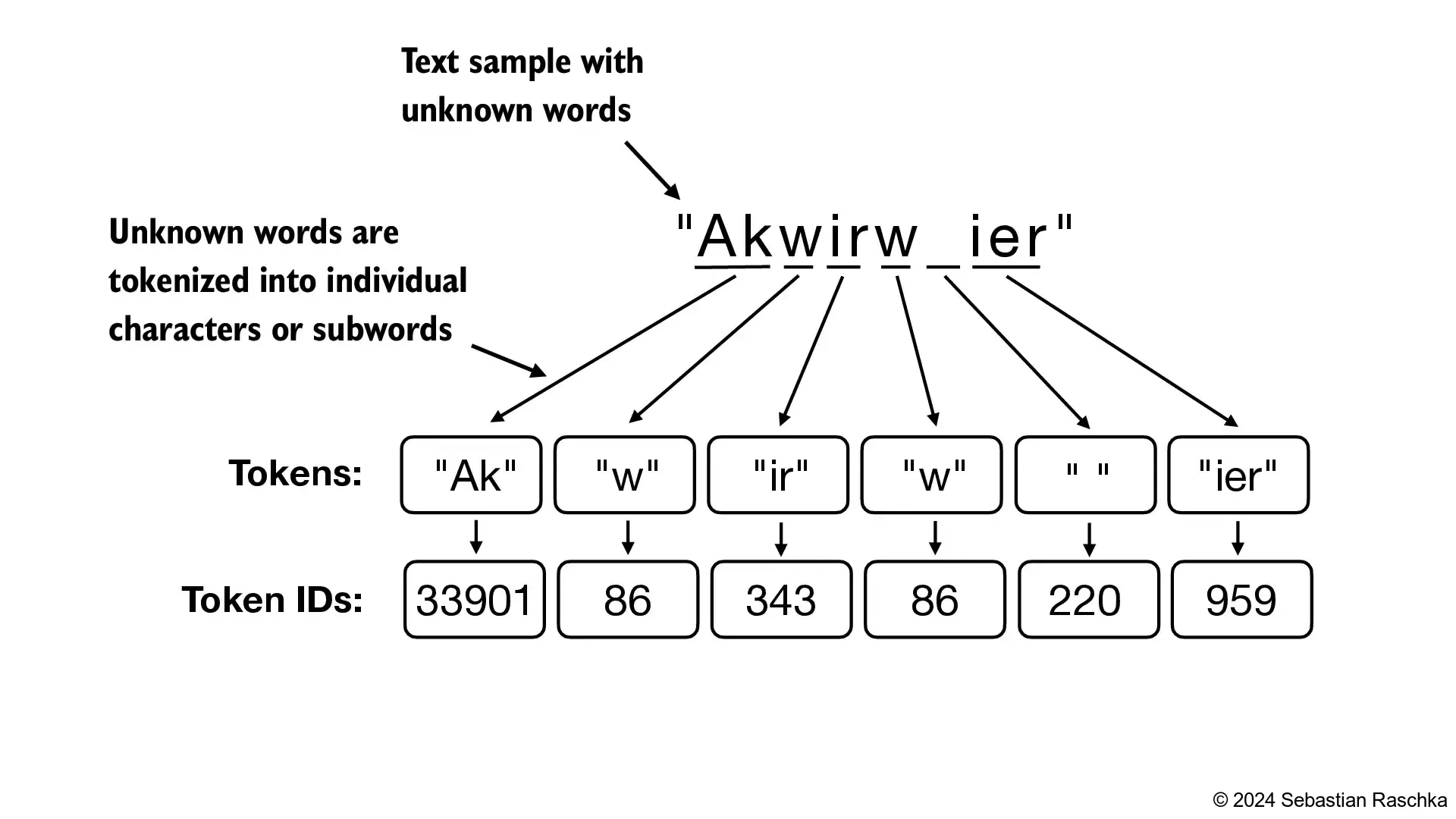

GPT-2 does not use an <UNK> token for out-of-vocabulary words; instead, GPT-2 uses a byte-pair encoding (BPE) tokenizer, which breaks down words into subword units, as we will discuss in a later section

We use the <|endoftext|> tokens between two independent sources of text:

---------------------------------------------------------------------------
KeyError                                  Traceback (most recent call last)
Cell In[17], line 5
      1 tokenizer = SimpleTokenizerV1(vocab)
      3 text = "Hello, do you like tea. Is this-- a test?"
----> 5 tokenizer.encode(text)

Cell In[13], line 12, in SimpleTokenizerV1.encode(self, text)
      7 preprocessed = re.split(r'([,.:;?_!"()\']|--|\s)', text)
      9 preprocessed = [
     10     item.strip() for item in preprocessed if item.strip()
     11 ]
---> 12 ids = [self.str_to_int[s] for s in preprocessed]
     13 return ids

Cell In[13], line 12, in <listcomp>(.0)
      7 preprocessed = re.split(r'([,.:;?_!"()\']|--|\s)', text)
      9 preprocessed = [
     10     item.strip() for item in preprocessed if item.strip()
     11 ]
---> 12 ids = [self.str_to_int[s] for s in preprocessed]
     13 return ids

KeyError: 'Hello'

The KeyError above is raised because the word "Hello" is not contained in the vocabulary. To deal with such cases, we can add two special tokens:

- "<|unk|>" to the vocabulary to represent unknown words
- "<|endoftext|>" which is used in GPT-2 training to denote the end of a text (it is also used between concatenated texts, for example when the training dataset consists of multiple articles, books, etc.)

We also need to adjust the tokenizer so that it knows when and how to use the new <|unk|> token:

class SimpleTokenizerV2:
    def __init__(self, vocab):
        self.str_to_int = vocab
        self.int_to_str = {i:s for s,i in vocab.items()}

    def encode(self, text):
        preprocessed = re.split(r'([,.:;?_!"()\']|--|\s)', text)
        preprocessed = [item.strip() for item in preprocessed if item.strip()]
        # Map every token that is not in the vocabulary to <|unk|>
        preprocessed = [
            item if item in self.str_to_int
            else "<|unk|>" for item in preprocessed
        ]

        ids = [self.str_to_int[s] for s in preprocessed]
        return ids

    def decode(self, ids):
        text = " ".join([self.int_to_str[i] for i in ids])
        # Remove spaces before the specified punctuation marks
        text = re.sub(r'\s+([,.:;?!"()\'])', r'\1', text)
        return text

Let’s try to tokenize text with the modified tokenizer:
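A self-contained sketch of this step might look as follows. The training text, the two input texts, and the helper `split_tokens` are illustrative assumptions; only the splitting rule and the two special tokens come from the code above.

```python
import re

def split_tokens(text):
    # Same splitting rule as the tokenizer classes above
    parts = re.split(r'([,.:;?_!"()\']|--|\s)', text)
    return [p.strip() for p in parts if p.strip()]

# Hypothetical stand-in for the training text
training_text = "Well, do you like tea? In the sunlit terraces of the palace."

# Build the vocabulary and append the two special tokens at the end
all_tokens = sorted(set(split_tokens(training_text)))
all_tokens.extend(["<|endoftext|>", "<|unk|>"])
vocab = {token: idx for idx, token in enumerate(all_tokens)}

# Join two independent texts with the <|endoftext|> separator
text1 = "Hello, do you like tea?"
text2 = "In the sunlit terraces of the palace."
text = " <|endoftext|> ".join((text1, text2))

# Replace out-of-vocabulary tokens with <|unk|> before the ID lookup
tokens = [t if t in vocab else "<|unk|>" for t in split_tokens(text)]
ids = [vocab[t] for t in tokens]

print(tokens)
print(ids)
```

Here "Hello" is the only word missing from the toy vocabulary, so it is the only token mapped to <|unk|>. The <|endoftext|> separator survives splitting because it contains none of the characters the regular expression splits on.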


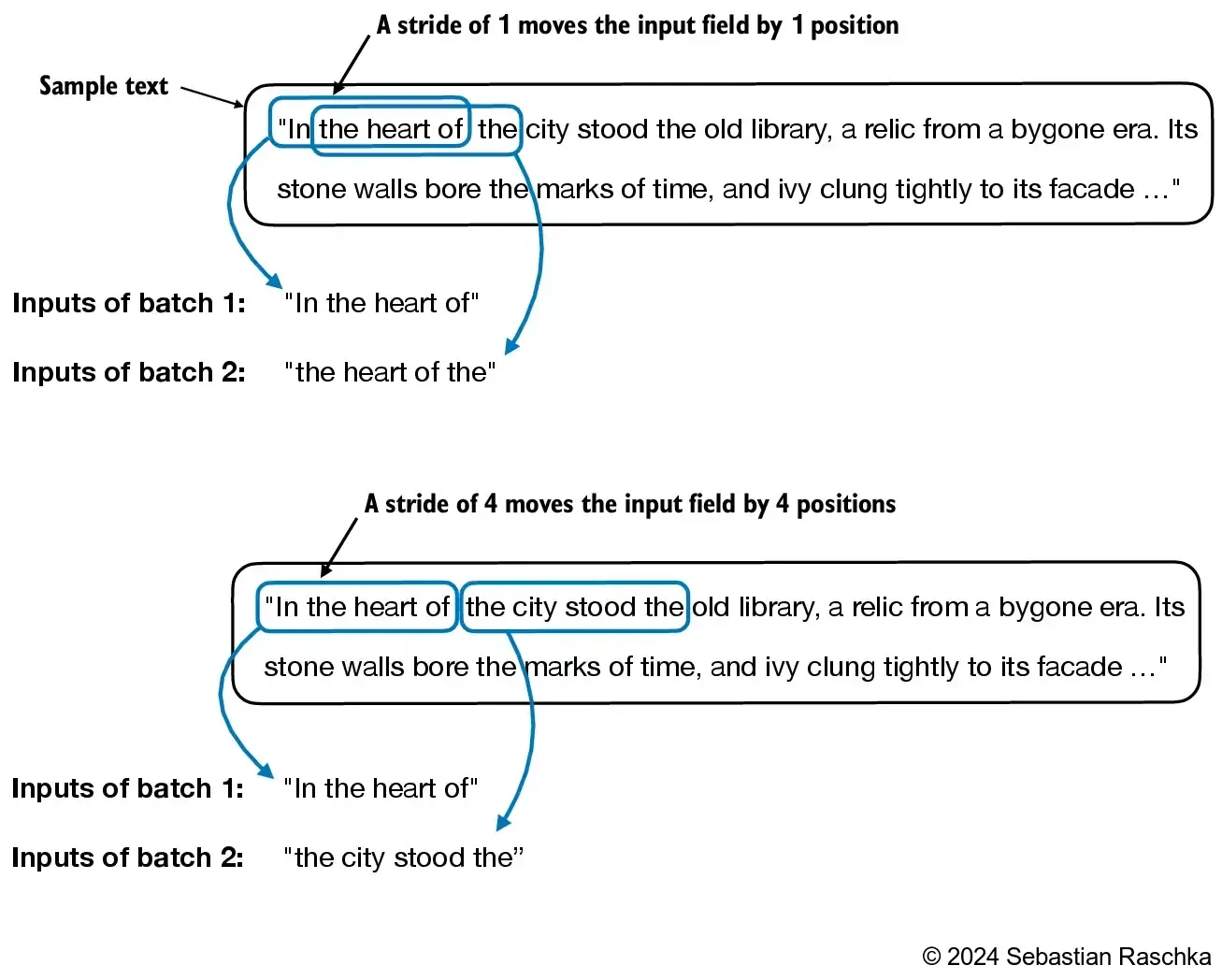

import torch
from torch.utils.data import Dataset, DataLoader


class GPTDatasetV1(Dataset):
    def __init__(self, txt, tokenizer, max_length, stride):
        self.input_ids = []
        self.target_ids = []

        # Tokenize the entire text
        token_ids = tokenizer.encode(txt, allowed_special={"<|endoftext|>"})
        assert len(token_ids) > max_length, "Number of tokenized inputs must at least be equal to max_length+1"

        # Use a sliding window to chunk the book into overlapping sequences of max_length
        for i in range(0, len(token_ids) - max_length, stride):
            input_chunk = token_ids[i:i + max_length]
            # Targets are the inputs shifted one position to the right
            target_chunk = token_ids[i + 1: i + max_length + 1]
            self.input_ids.append(torch.tensor(input_chunk))
            self.target_ids.append(torch.tensor(target_chunk))

    def __len__(self):
        return len(self.input_ids)

    def __getitem__(self, idx):
        return self.input_ids[idx], self.target_ids[idx]
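The effect of max_length and stride is easier to see on a toy sequence. The following plain-Python sketch reuses the same loop bounds as the dataset class. The function name `sliding_windows` and the integer stand-ins for token IDs are assumptions made for illustration.

```python
def sliding_windows(token_ids, max_length, stride):
    """Return (input, target) chunk pairs; each target is its input shifted by one."""
    pairs = []
    for i in range(0, len(token_ids) - max_length, stride):
        input_chunk = token_ids[i:i + max_length]
        target_chunk = token_ids[i + 1:i + max_length + 1]
        pairs.append((input_chunk, target_chunk))
    return pairs

token_ids = list(range(10))  # stand-in for real token IDs

for stride in (1, 4):
    print(f"stride={stride}")
    for x, y in sliding_windows(token_ids, max_length=4, stride=stride):
        print("  ", x, "->", y)
```

With a stride of 1, consecutive inputs overlap in all but one position. With a stride equal to max_length, the inputs do not overlap at all.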

import tiktoken


def create_dataloader_v1(txt, batch_size=4, max_length=256,
                         stride=128, shuffle=True, drop_last=True,
                         num_workers=0):
    # Initialize the tokenizer
    tokenizer = tiktoken.get_encoding("gpt2")

    # Create dataset
    dataset = GPTDatasetV1(txt, tokenizer, max_length, stride)

    # Create dataloader
    dataloader = DataLoader(
        dataset,
        batch_size=batch_size,
        shuffle=shuffle,
        drop_last=drop_last,
        num_workers=num_workers
    )

    return dataloader


Inputs:

tensor([[ 40, 367, 2885, 1464],

[ 1807, 3619, 402, 271],

[10899, 2138, 257, 7026],

[15632, 438, 2016, 257],

[ 922, 5891, 1576, 438],

[ 568, 340, 373, 645],

[ 1049, 5975, 284, 502],

[ 284, 3285, 326, 11]])

Targets:

tensor([[ 367, 2885, 1464, 1807],

[ 3619, 402, 271, 10899],

[ 2138, 257, 7026, 15632],

[ 438, 2016, 257, 922],

[ 5891, 1576, 438, 568],

[ 340, 373, 645, 1049],

[ 5975, 284, 502, 284],

[ 3285, 326, 11, 287]])


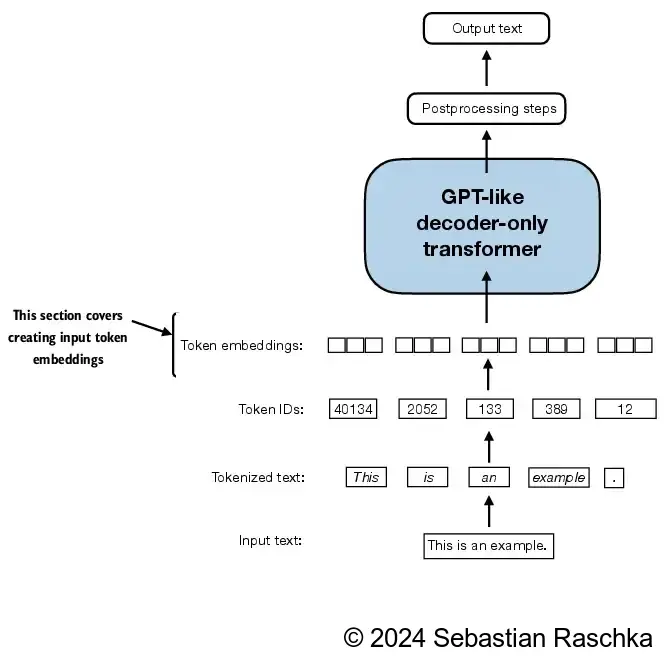

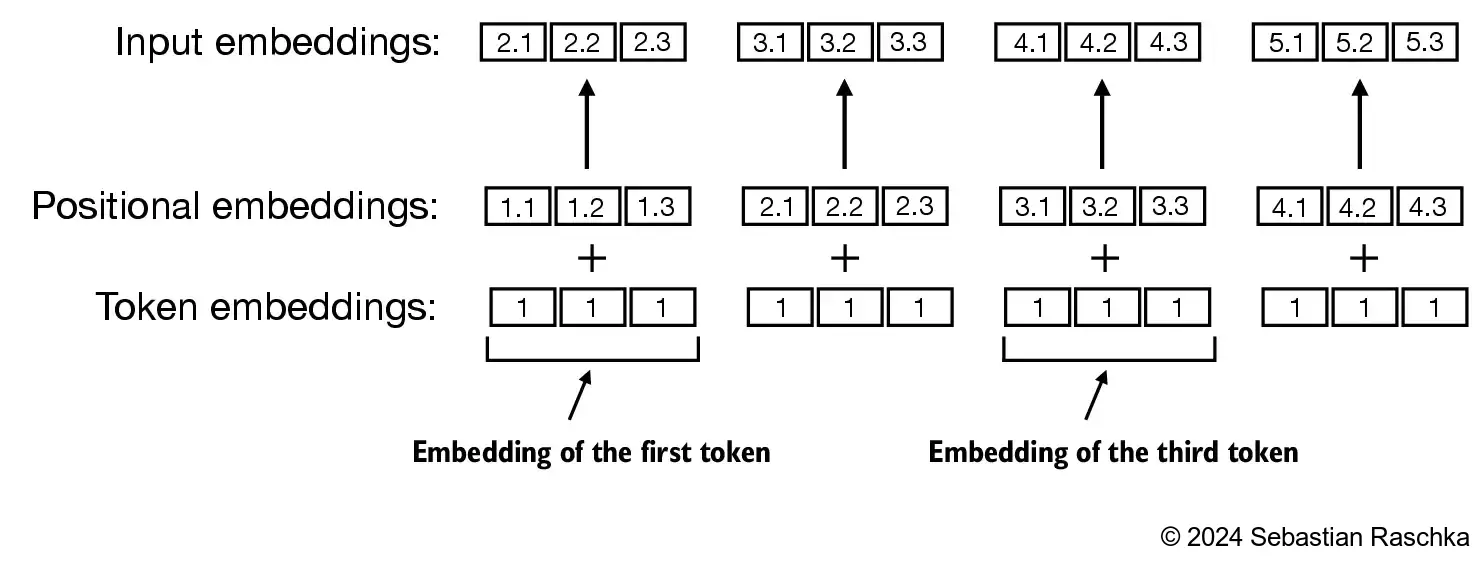

… embedding_layer weight matrix

… input_ids values above, we do
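Embedding a list of token IDs amounts to looking up rows of the weight matrix. The plain-Python sketch below is a stand-in for what an embedding layer such as `torch.nn.Embedding` does. The sizes, the random weights, and the helper names `embed` and `one_hot_matmul` are illustrative assumptions.

```python
import random

random.seed(123)
vocab_size, output_dim = 6, 3  # assumed toy sizes

# Stand-in for the embedding layer's weight matrix: one row per token ID
weight = [[round(random.uniform(-1, 1), 2) for _ in range(output_dim)]
          for _ in range(vocab_size)]

def embed(token_ids):
    # Embedding lookup: pick the row whose index equals the token ID
    return [weight[i] for i in token_ids]

def one_hot_matmul(token_ids):
    # Equivalent but slower formulation: one-hot vector times weight matrix
    rows = []
    for i in token_ids:
        one_hot = [1.0 if j == i else 0.0 for j in range(vocab_size)]
        rows.append([sum(one_hot[j] * weight[j][k] for j in range(vocab_size))
                     for k in range(output_dim)])
    return rows

input_ids = [2, 3, 5, 1]  # hypothetical token IDs
embedded = embed(input_ids)

for token_id, vector in zip(input_ids, embedded):
    print(token_id, vector)
```

Both functions return the same vectors. The lookup simply skips the multiplications by zero, which is why embedding layers are implemented as row lookups.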


Token IDs:

tensor([[ 40, 367, 2885, 1464],

[ 1807, 3619, 402, 271],

[10899, 2138, 257, 7026],

[15632, 438, 2016, 257],

[ 922, 5891, 1576, 438],

[ 568, 340, 373, 645],

[ 1049, 5975, 284, 502],

[ 284, 3285, 326, 11]])

Inputs shape:

torch.Size([8, 4])


See the ./dataloader.ipynb code notebook, which is a concise version of the data loader that we implemented in this chapter and will need for training the GPT model in upcoming chapters.

See ./exercise-solutions.ipynb for the exercise solutions.

See the Byte Pair Encoding (BPE) Tokenizer From Scratch notebook if you are interested in learning how the GPT-2 tokenizer can be implemented and trained from scratch.